Subfuse Postmortem

Style

We initially planned a full PBR asset pipeline with textures for color, roughness, metallic, and normals, using cubemaps for reflections in addition to baked light maps. About halfway through the jam, we had only just started to get the asset pipeline from Blender set up, and it didn't seem like there was enough time both to author all of the art needed and to work out the inevitable kinks from dealing with that many assets, all while keeping things as performant as possible.

That's when we decided on the retro aesthetic you can see in the game now. We would only bake the lighting information, with the color included and roughness approximated. This would significantly simplify both the asset creation process and the asset pipeline.

Thankfully, the Blender Cycles renderer has an option in the performance settings for pixel size, so we could get an idea of what it would look like in game. In Bevy, I set up the main camera to render to a texture, and resized that texture based on the window size. It was set up so that the final resolution would be between 256 and 512 pixels tall, always picking an integer multiple for the pixel size to keep things crisp. Because aliasing is more apparent at these resolutions, it renders at double the final emulated resolution and uses 2x SSAA (super sample anti-aliasing) in the post process fragment shader. Since I didn't need interpolated values for the input to the post process, I just used 4 textureLoad calls per fragment instead of relying on an unnecessary hardware sampler with textureSample.
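As a rough illustration of the sizing rule and the 2x downsample described above, here is a minimal CPU-side sketch in plain Rust. It is not the game's code: the function names are hypothetical, the rule "pick the smallest integer pixel size that keeps the image at most 512 pixels tall" is an assumption about how the 256 to 512 range was hit, and `downsample_2x` only stands in for the four textureLoad calls on a single-channel image.

```rust
/// Hypothetical helper: smallest integer pixel size that keeps the emulated
/// image at most 512 pixels tall (assumed rule, not confirmed by the source).
fn pixel_size(window_height: u32) -> u32 {
    const MAX_EMULATED_HEIGHT: u32 = 512;
    // Ceiling division, clamped so tiny windows still get a pixel size of 1.
    ((window_height + MAX_EMULATED_HEIGHT - 1) / MAX_EMULATED_HEIGHT).max(1)
}

/// Returns (emulated_height, render_height). The render target is twice the
/// emulated height so the post process can average it back down (2x SSAA).
fn resolution(window_height: u32) -> (u32, u32) {
    let emulated = window_height / pixel_size(window_height);
    (emulated, emulated * 2)
}

/// CPU stand-in for the four unfiltered loads: averages the 2x2 block of
/// source texels that covers output pixel (x, y).
fn downsample_2x(src: &[f32], src_width: usize, x: usize, y: usize) -> f32 {
    let (sx, sy) = (x * 2, y * 2);
    let load = |px: usize, py: usize| src[py * src_width + px];
    (load(sx, sy) + load(sx + 1, sy) + load(sx, sy + 1) + load(sx + 1, sy + 1)) * 0.25
}
```

Under these assumptions a 1080 pixel tall window gets a pixel size of 3, an emulated height of 360, and a render target 720 pixels tall. For any window at least 256 pixels tall, this rule keeps the emulated height within the stated range.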

Post Process

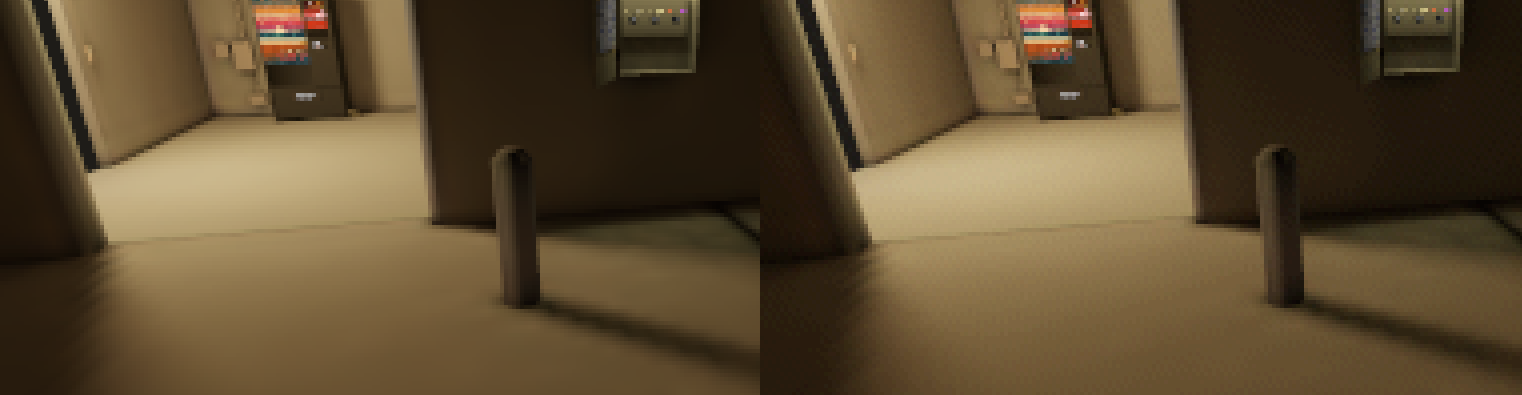

Here's a breakdown of the post process shader:

(The NO POST PROCESS image is from the editor mode, and the editor camera doesn't have the same FOV as the game camera.)

The banding and dithering were applied heavily to try to somewhat emulate the feel of older games with limited palettes. This had the additional benefit of slightly improving the look of the low resolution baked shadows, obscuring the jaggedness a bit.
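The post doesn't say which dithering method the shader uses, so the following is only a sketch of one common way to combine banding with dithering: quantize each channel to a small number of levels and offset the rounding with a 4x4 ordered (Bayer) threshold. The matrix choice, the function name, and the `levels` parameter are all assumptions made for illustration.

```rust
/// Standard 4x4 Bayer matrix (assumed; the game's shader may use something else).
const BAYER_4X4: [[u8; 4]; 4] = [
    [0, 8, 2, 10],
    [12, 4, 14, 6],
    [3, 11, 1, 9],
    [15, 7, 13, 5],
];

/// Quantizes `value` (0.0..=1.0) to `levels` evenly spaced bands, using the
/// pixel position to decide whether to round up or down.
fn dither_quantize(value: f32, x: u32, y: u32, levels: u32) -> f32 {
    assert!(levels >= 2, "need at least two output levels");
    // Per-pixel threshold strictly between 0 and 1.
    let threshold = (BAYER_4X4[(y % 4) as usize][(x % 4) as usize] as f32 + 0.5) / 16.0;
    let steps = (levels - 1) as f32;
    let scaled = value.clamp(0.0, 1.0) * steps;
    // Adding the threshold before flooring rounds up on some pixels and down on others.
    (scaled + threshold).floor().min(steps) / steps
}
```

With two levels, a flat 50% gray turns into a pattern where half of the pixels in every 4x4 tile are white, which is the kind of texture that helps hide stair-stepped shadow edges.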

Performance

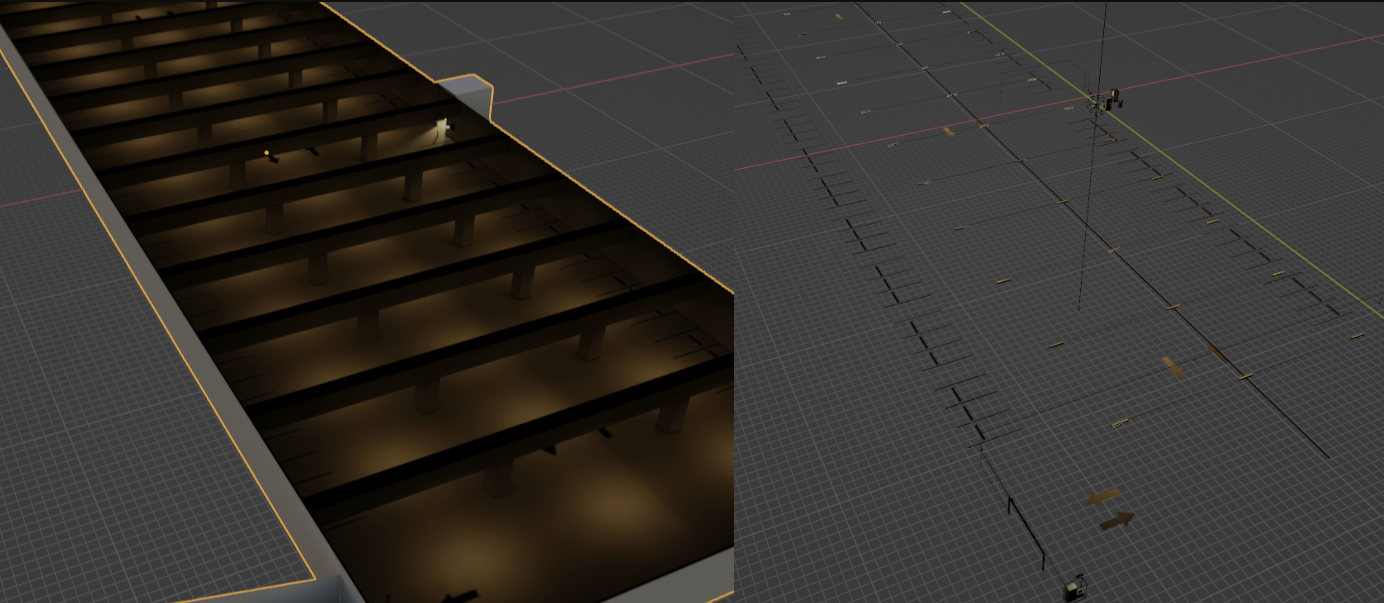

We wanted the game to be playable in the browser using an integrated GPU, and a lot of things went into making this possible. This was doable in our last game jam entry, Confluence of Futility, but only with the graphics options set as low as possible. The game made use of low resolution textures, and only used the light map, omitting all other textures. I was hoping this could be a more consistent experience, where players could get decent frame rates without needing to adjust quality settings. This was going to be a challenge, though, as Subfuse was going to have significantly more geometry than Confluence of Futility did.

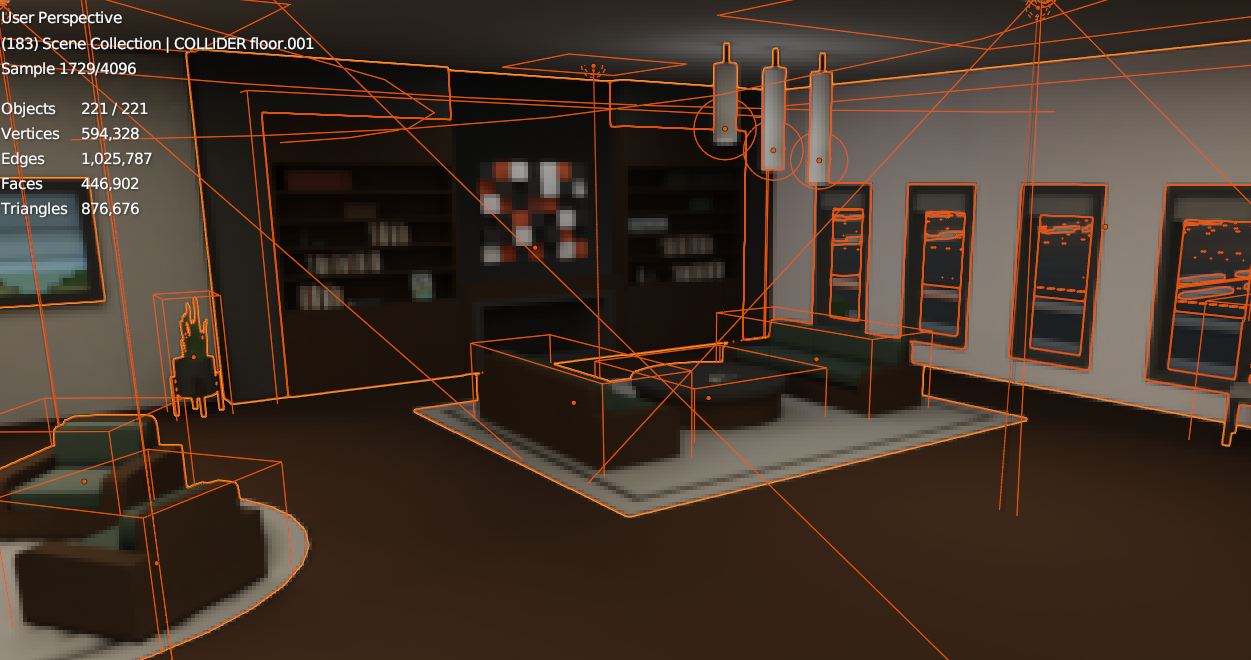

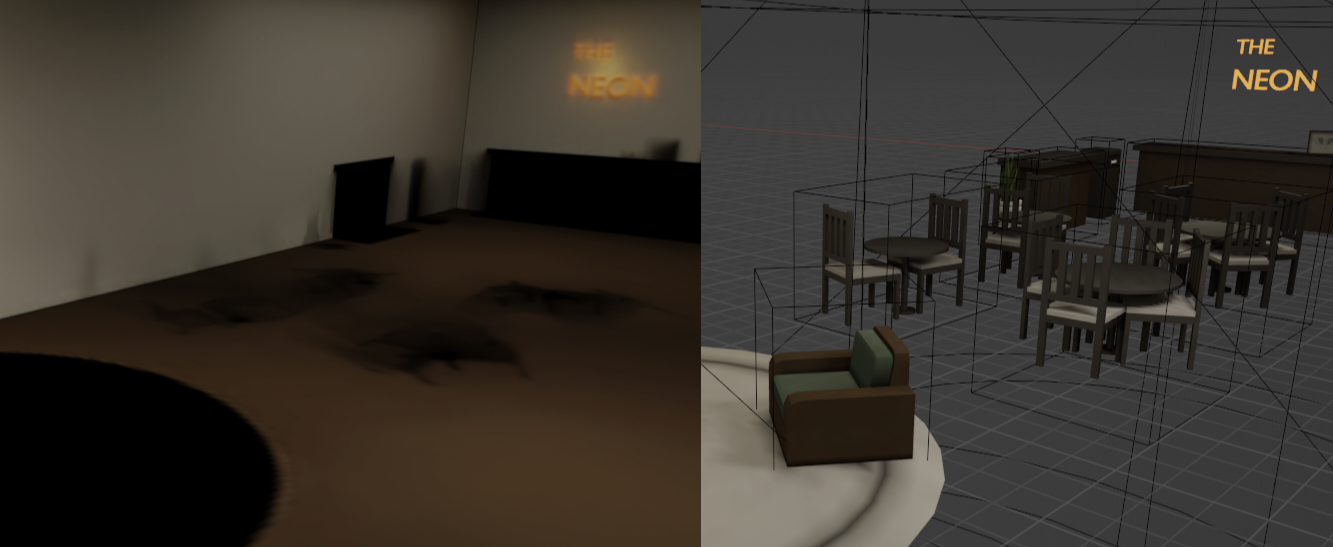

Bevy doesn't currently implement any batching or instancing when rendering 3D entities. Each entity involves a separate draw call. This is problematic when trying to render thousands of entities, especially on low end hardware. To avoid this issue, we combined all of the static entities into just a few different groups. One group has the architectural elements (floor, walls, etc.). These are all very low poly, their trimesh is used directly for collision in Rapier, and they use baked texture maps for lighting and color. For the smaller objects (props), we use cuboid colliders in Rapier, defined using cube style empty objects in Blender, and we baked the lighting and colors to vertex colors rather than texture maps.
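In our case the joining happened in Blender, but the idea is easy to show in a few lines. The sketch below is purely illustrative: the `Mesh` struct and `merge` function are hypothetical, and it assumes vertex positions are already in world space. It concatenates vertex data and shifts each mesh's indices so that many small props end up in one buffer that can be drawn with a single call.

```rust
/// Hypothetical minimal mesh: positions, per-vertex baked colors, triangle indices.
#[derive(Default)]
struct Mesh {
    positions: Vec<[f32; 3]>,
    colors: Vec<[f32; 4]>,
    indices: Vec<u32>,
}

/// Joins several meshes into one. Assumes positions are already in world space.
fn merge(meshes: &[Mesh]) -> Mesh {
    let mut out = Mesh::default();
    for mesh in meshes {
        // Indices of this mesh must point past the vertices already appended.
        let base = out.positions.len() as u32;
        out.positions.extend_from_slice(&mesh.positions);
        out.colors.extend_from_slice(&mesh.colors);
        out.indices.extend(mesh.indices.iter().map(|i| i + base));
    }
    out
}
```

Baking lighting into vertex colors fits this approach well, since the merged props then need no per-object texture or material.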

Architectural elements using textures on the left, props using vertex colors on the right.

Getting all of this working involved spending an unfortunate amount of time writing Python scripts for Blender that would automate the process of getting modifiers applied, entities joined into the groups, lighting baked, etc.

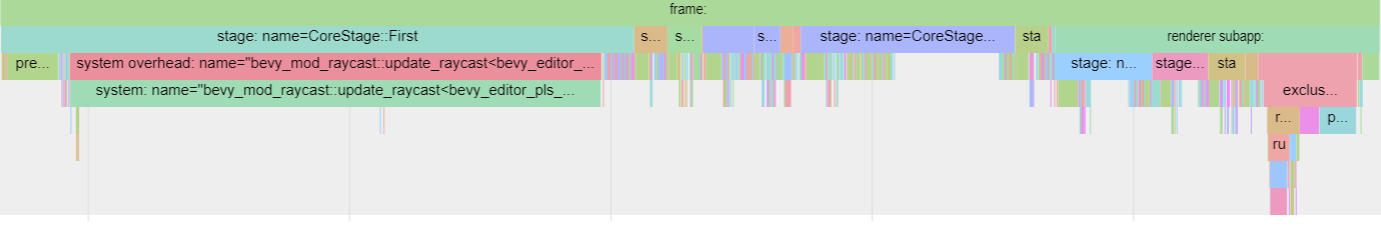

We seemed to be hard capped around 100fps on many different systems regardless of GPU. On our minimum target test setup in Chrome using an Intel UHD 630, we were at 40-60fps, but it was extremely stuttery. Looking at a trace, we found that a large percentage of the frame time was being used by bevy_mod_raycast, which bevy_editor_pls was using for picking (selecting entities with the mouse).

I forked bevy_editor_pls with the picking functionality stripped out, and we went from around 100fps max to 600-700fps on a GTX 1060 and RTX 3060. With the Chrome/integrated setup we were at 80fps with no visible stutter. We eventually also set up the Cargo.toml so the release build would not include bevy_editor_pls.

Frustum Culling

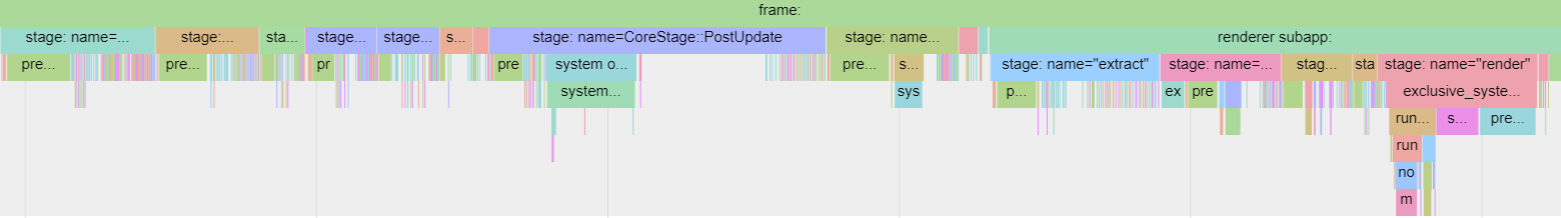

We then noticed that PostUpdate seemed to be taking a while. That turned out to be the frustum culling. Since we had so few individual renderable entities, and they were generally all around the player at all times, we disabled frustum culling altogether with the NoFrustumCulling component, and gained an additional 100fps or so on the GTX 1060 & RTX 3060.

Entity system

As we were using Blender for level design, we needed a way to mark which entities were collidable, doors, triggers, etc.

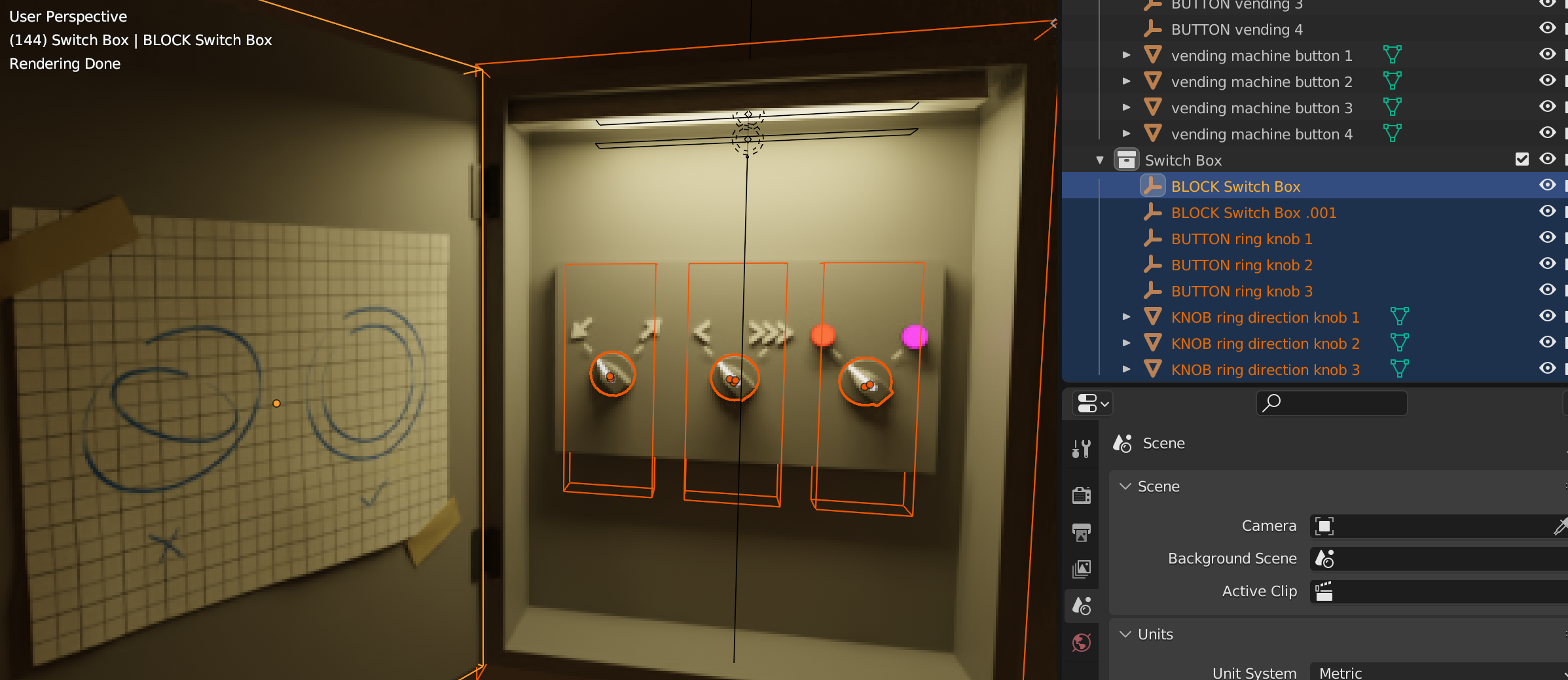

We did this with a naming system, where meshes were given a prefix name in Blender, and the game would treat the meshes based on the label.

For example, COLLIDER would add a collision box around the mesh and DOOR_LINEAR would let the mesh move linearly like a door. We could use Blender's custom properties to specify settings for each entity, such as how far the door should move, and how fast. With the right export settings in Blender, these properties conveniently show up in the glTF file as gltf-extras.

With this setup, a small system was written for each level to link behavior between the generated entities, such as opening/closing doors based on trigger enter/exit events.
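To make the door behavior concrete, here is a small self-contained sketch in plain Rust, without Bevy. It is not the game's code: the struct, its fields (`distance` and `speed` standing in for the Blender custom properties), and the `on_trigger` function are hypothetical names chosen for illustration.

```rust
/// Hypothetical door state. `distance` and `speed` stand in for the values
/// read from the Blender custom properties.
struct DoorLinear {
    distance: f32, // how far the door travels when fully open
    speed: f32,    // units per second, assumed non-negative
    offset: f32,   // current displacement along the door's travel axis
    open: bool,
}

impl DoorLinear {
    /// Moves the door toward its open or closed position without overshooting.
    fn step(&mut self, dt: f32) {
        let target = if self.open { self.distance } else { 0.0 };
        let max_step = self.speed * dt;
        self.offset += (target - self.offset).clamp(-max_step, max_step);
    }
}

enum TriggerEvent {
    Enter,
    Exit,
}

/// Per-level glue: open the door while the player is inside the trigger.
fn on_trigger(door: &mut DoorLinear, event: TriggerEvent) {
    door.open = matches!(event, TriggerEvent::Enter);
}
```

Keeping the door logic generic and the per-level glue tiny is what lets each level get away with only a small linking system.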

The entities used for the game include:

- BUTTON: Emits events when hovered or clicked.

- TRIGGER: Emits events when the player enters/exits the region.

- BLOCK: A cuboid collider, useful for invisible walls, etc.

- COLLIDER: A tri-mesh physics collider.

- DOOR_LINEAR: Moves linearly based on open/closed state.

- TELEPORT: Teleports the player on touch to a destination.

- TELEPORT_DESTINATION: A destination the player can teleport to.
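The prefix lookup itself can be sketched in a few lines of plain Rust. This is an illustration under assumptions rather than our actual code: the enum, the table, and the `classify` function are hypothetical, and it assumes names are matched with a simple starts-with check (so Blender suffixes like `.001` are ignored).

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum EntityKind {
    Button,
    Trigger,
    Block,
    Collider,
    DoorLinear,
    Teleport,
    TeleportDestination,
}

/// Prefix table. TELEPORT_DESTINATION is listed before TELEPORT so the longer
/// prefix wins when both match.
const PREFIXES: [(&str, EntityKind); 7] = [
    ("TELEPORT_DESTINATION", EntityKind::TeleportDestination),
    ("TELEPORT", EntityKind::Teleport),
    ("DOOR_LINEAR", EntityKind::DoorLinear),
    ("COLLIDER", EntityKind::Collider),
    ("BUTTON", EntityKind::Button),
    ("TRIGGER", EntityKind::Trigger),
    ("BLOCK", EntityKind::Block),
];

/// Returns the entity kind for a Blender object name, or None for plain meshes.
fn classify(name: &str) -> Option<EntityKind> {
    PREFIXES
        .iter()
        .find(|(prefix, _)| name.starts_with(*prefix))
        .map(|(_, kind)| *kind)
}
```

The one subtlety is ordering: because TELEPORT is itself a prefix of TELEPORT_DESTINATION, the longer name has to be checked first.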

While we initially were using the teleports with the elevator, we ultimately decided to despawn/spawn the level around the elevator, rather than teleport the player.

We considered using the gltf-extras for defining the entity type, but ultimately liked the clarity and simplicity of seeing the all caps text in Blender's outliner rather than only knowing what it was set to when selected. I'm interested in looking more into what could be possible.

One downside of this naming system is that, for meshes, the entity that holds the mesh component gets its own separate name, and it is that entity's parent that carries the name assigned to the object in Blender.

To get this all working, we made extensive use of a forked version of bevy_scene_hook.

What I would have done differently (Griffin):

I spent way too much time getting the asset pipeline set up, probably about half of the jam. Realistically, the pipeline could have been figured out ahead of time, then adapted to fit the actual theme/game. I had tested out a few ideas in preparation for the jam, but didn't have enough fleshed out, and hadn't set up something that would work at the scale needed for the game. Once the asset pipeline was ironed out, and we were getting comfortable with iteration, we were able to add content to the game very quickly. I was quite surprised how fast and productive we were able to be using Blender as an editor. Unfortunately, by the time we got to this point the jam was almost over. All the actual game mechanics, story, music, 90% of the sound effects, and a few whole levels were authored and added in the last few days.


Comments


Hello, this is a fascinating post-mortem and I would love to talk to you about the asset pipeline in more detail! Do you all have discord?

Thank you for detailing the process. It is really appreciated, and will help save time later on in the future for bevy devs (like me). I had the fortune to play your game until the end, just browsing itch.io. By pure coincidence found this post-mortem. Wish you the best with any future projects. Had way too much fun playing it.